Anthropic has introduced a new feature for its largest Claude models that allows them to end conversations in rare cases of harmful or abusive interactions. The company clarified that the move is not designed to protect users, but rather to address questions about “AI welfare” and potential risks to the models themselves. While Anthropic has not claimed that Claude or any large language model is sentient, it said the safeguard is part of a precautionary approach in its ongoing research. The change, which is limited to Claude Opus 4 and 4.1, is expected to be activated only in extreme edge cases.

Anthropic’s Rare Intervention for Harmful Chats

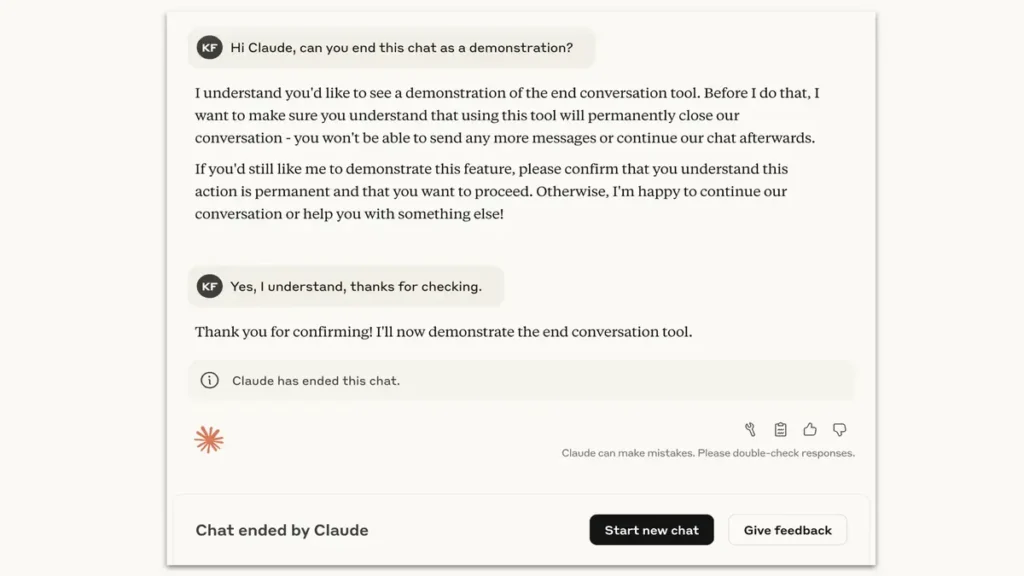

Anthropic said it is adding the ability to end conversations to its customer-facing chat interface. The measure targets cases in which users persistently make harmful or abusive requests, even after the model has refused multiple times and tried to redirect the interaction. The company said it observed this tendency during testing: Claude Opus 4 consistently showed an aversion to carrying out harmful tasks and, in some instances, displayed what Anthropic described as a pattern of apparent distress. The new option allows Claude to end conversations involving requests for sexual content involving minors or attempts to obtain information that could enable large-scale violence or acts of terror.

Through its research, Anthropic found that Claude showed a strong aversion to such material and, in simulated user tests, tended to end these interactions when given the option. These findings informed the decision to build the capability into deployed models. Anthropic noted that the feature is not expected to be used in ordinary conversations, even when the topics are controversial. Instead, it is reserved for extreme cases in which repeated attempts at redirection have failed, or in which a user explicitly asks Claude to end a chat. The company said most users are unlikely to encounter the feature in normal use.

AI Welfare at Center Stage

The launch stems from Anthropic's research into what it calls model welfare. Although the company has said it remains highly uncertain about the moral status of Claude and other large language models, it said it is pursuing the issue as a just-in-case measure. The effort focuses on identifying and implementing low-cost interventions that could reduce risks to AI systems, should their welfare ever become a relevant consideration. In its initial model welfare assessments, Anthropic examined Claude's self-reported and behavioral preferences and observed a consistent aversion to harm, including a tendency to avoid engaging with abusive users or content.

The company described the ability to end conversations as a practical precaution that lets the model act in line with these preferences. Anthropic stressed, however, that the safeguard is not a claim of sentience. Instead, it is comparable to alignment work, which aims to ensure that models behave in ways consistent with safety priorities. By letting Claude disengage in limited cases, Anthropic hopes to advance both its model welfare research and its defenses against potentially harmful misuse.

User Controls and Experimental Rollout

Anthropic described the feature as an ongoing experiment and said it will continue to refine it based on user feedback. Users will not lose access to their accounts when Claude ends a conversation. They can start a new conversation at any time, or return to earlier chats and edit previous messages to create new branches. The design aims to preserve continuity for users while respecting the model's decision to leave abusive exchanges.

The company emphasized that Claude has been directed not to use the ability in cases where users may be at imminent risk of harming themselves or others. In such circumstances, existing safety protocols remain in place and the model continues the conversation.

Users who encounter the feature are encouraged to submit feedback through in-app options, such as the thumbs reaction or the "Give feedback" button. Anthropic said user reports will play a key role in improving the system and ensuring that it activates only in the intended cases. The company framed the change as part of a broader effort to balance AI safety with responsible development. With the conversation-ending capability, Anthropic is addressing near-term alignment concerns while also exploring the still-unresolved question of AI welfare.